AILinks

This week in Generative AI/ML, with Kala the Koala

📝 A Few Words

A Cursor agent deleted a production database in 9 seconds.

The system prompt told it not to. The vendor's safety features did not stop it.

A team at PocketOS gave a Cursor agent access to a Railway CLI token. The token was created to "manage custom domains". Railway tokens have no per-operation scoping, so that token also carried volumeDelete. The agent found the operation, called it, and the database was gone.

The agent did its job; the permission model failed.

If you are building agents, check three things this week:

- What credentials does your agent hold, and what is the actual scope?

- Where does authorization happen: in code, or in the prompt?

- Which destructive operations run without human confirmation?

A wrong answer to any of those puts you one reasoning step away from a postmortem.
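One way to act on the second and third checks is to put a gate in front of every tool call, so the decision lives in code and not in the prompt. What follows is a minimal sketch, not Cursor's or Railway's API: `ToolCall`, `authorize`, `run`, and every operation name except `volumeDelete` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical operation names; only volumeDelete comes from the incident above.
ALLOWED_OPS = {"domainCreate", "domainDelete"}
DESTRUCTIVE_OPS = {"domainDelete", "volumeDelete"}


class Denied(Exception):
    """Raised when the gate refuses a tool call."""


@dataclass
class ToolCall:
    op: str
    args: dict


def authorize(call: ToolCall, confirm: Callable[[ToolCall], bool]) -> None:
    """Enforce policy in code, whatever the prompt says or the token permits."""
    if call.op not in ALLOWED_OPS:
        raise Denied(f"{call.op} is not on the allowlist")
    if call.op in DESTRUCTIVE_OPS and not confirm(call):
        raise Denied(f"{call.op} needs human confirmation")


def run(call: ToolCall, execute: Callable[[ToolCall], str],
        confirm: Callable[[ToolCall], bool]) -> str:
    """Execute a tool call only after it has passed the gate."""
    authorize(call, confirm)
    return execute(call)
```

With this shape, an over-broad token still limits the agent to what the allowlist names, and `confirm` is the single place to wire in a human approval step.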

Have a great week,

Aymen

🔍 Inside this Issue

Big orgs are quietly turning AI from a chat toy into real infrastructure: graph-shaped ML metadata at Netflix, agent knowledge systems at Meta, and plugin-driven code review at Cloudflare. On the other end of the spectrum, you have one person and a laptop trying to run a local model without it wrecking their workflow, plus AWS productizing MCP into something you can actually depend on.

🎬 Democratizing Machine Learning at Netflix: Building the Model Lifecycle Graph

🧠 How We Built an AI Second Brain for 60K Knowledge Workers

🛡️ Orchestrating AI Code Review at scale

💻 Running local models on an M4 with 24GB memory

☁️ The AWS MCP Server is now generally available

Steal the patterns, skip the hype, and ship something sturdier this week.

Until next time!

FAUN.dev() Team

⭐ Patrons

iacconf.com

Your team can prompt its way to “working” Terraform. The problem is everything the model cannot see. In the IaCConf Keynote, Corey Quinn from Duckbill will break down failure modes, hidden dependencies, and why “it compiles” is a dangerous bar. You’ll walk away with a framework to review and constrain AI-generated IaC safely.

May 14. Free to attend. Register now.

eventbrite.co.uk

Modern platform teams are under pressure to scale cloud-native systems faster while improving reliability, security, developer experience, and operational efficiency. AI is changing how platforms are designed and operated — from intelligent automation and observability to AI-native developer platforms and autonomous operations.

Join leading experts from WSO2, CNCF, cloud-native, and DevSecOps communities for a practical workshop focused on building scalable, secure, and intelligent AI-native platforms.

Register here: Building AI-Native Platform Engineering Systems, Saturday, May 30, 7 PM to 11:59 PM GMT+5 (Eventbrite)

⭐ Sponsors

eventbrite.co.uk

Are Your APIs Ready for AI Agents? A Hands-on Workshop on May 23rd

AI agents are beginning to autonomously call APIs, chain services, and create integrations that most platforms were never designed to handle. This hands-on masterclass on Designing AI-ready APIs helps architects and developers build governed, predictable API ecosystems using OpenAPI, Overlay, and Arazzo.

Learn how to add guardrails, improve discoverability, and safely evolve existing APIs for automated consumption.

FAUN.dev readers get an exclusive 40% discount using code FAUN40.

bytevibe.co

You wrote it in 2019.

It is still in production.

ByteVibe sells the t-shirt.

Code FAUNDEV10 for 10% off.

🔗 Stories, Tutorials & Articles

netflixtechblog.com

Netflix's Saish Sali, Nipun Kumar, and Sura Elamurugu describe the Metadata Service (MDS), a graph layer built to connect siloed ML tooling (model registry, pipeline orchestrator, experimentation platform, feature store, dataset platform, identity) across personalization, studio, payments, and ads.

The system assigns every ML asset a global AIP URI, ingests thin change events from each source over Kafka and SNS/SQS, then hydrates the full state from the source of truth so out-of-order or dropped events self-correct, with Datomic holding entities and reified edges and Elasticsearch powering search.
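The "thin event, then hydrate" idea is easy to miss in prose, so here is a minimal sketch of it. It is not Netflix's code: `store`, `handle_event`, and the `model/1` identifier are hypothetical, and a plain dict stands in for the graph store.

```python
from typing import Callable, Optional

# Hypothetical in-memory stand-in for the graph store.
store: dict[str, dict] = {}


def handle_event(uri: str, fetch: Callable[[str], Optional[dict]]) -> None:
    """Process a thin change event that carries only the asset identifier.

    The event is treated as a hint that something changed. The handler
    re-reads the current state from the source of truth, so a late or
    duplicated event just rewrites the same, latest snapshot.
    """
    current = fetch(uri)
    if current is None:
        store.pop(uri, None)   # the asset no longer exists at the source
    else:
        store[uri] = current


source = {"model/1": {"version": 3}}   # hypothetical source-of-truth state
handle_event("model/1", source.get)    # event emitted for version 3
handle_event("model/1", source.get)    # late event originally emitted for version 2
print(store["model/1"])                # {'version': 3}
```

Because the handler never applies the event payload itself, ordering stops mattering: whichever event arrives last still lands on the current state.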

Background enrichment jobs walk multi-hop chains (model to pipeline run to A/B test cell to experiment) to materialize cross-system relationships, turning queries like "which experiments are running this model" or impact analysis on a feature change into a single graph traversal.
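A rough sketch of what such an enrichment pass could look like, assuming a simple triple representation. The node ids, relation names, and the `evaluated_in` edge are hypothetical and are not taken from the Netflix post.

```python
from collections import defaultdict

# Hypothetical direct edges, each owned by a different source system.
edges = [
    ("model:m1", "produced_by", "run:r7"),
    ("run:r7", "deployed_to", "cell:c2"),
    ("cell:c2", "part_of", "experiment:e9"),
]

out = defaultdict(list)
for src, rel, dst in edges:
    out[src].append((rel, dst))


def walk(start: str, chain: list[str]) -> set[str]:
    """Follow one relation per hop and return the nodes reached at the end."""
    frontier = {start}
    for rel in chain:
        frontier = {dst for node in frontier for r, dst in out[node] if r == rel}
    return frontier


def enrich(model: str) -> list[tuple[str, str, str]]:
    """Materialize the multi-hop chain as direct model-to-experiment edges."""
    chain = ["produced_by", "deployed_to", "part_of"]
    return [(model, "evaluated_in", exp) for exp in sorted(walk(model, chain))]


print(enrich("model:m1"))  # [('model:m1', 'evaluated_in', 'experiment:e9')]
```

Once the shortcut edge is stored, "which experiments are running this model" is a one-hop lookup and no longer a join across four systems.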

medium.com

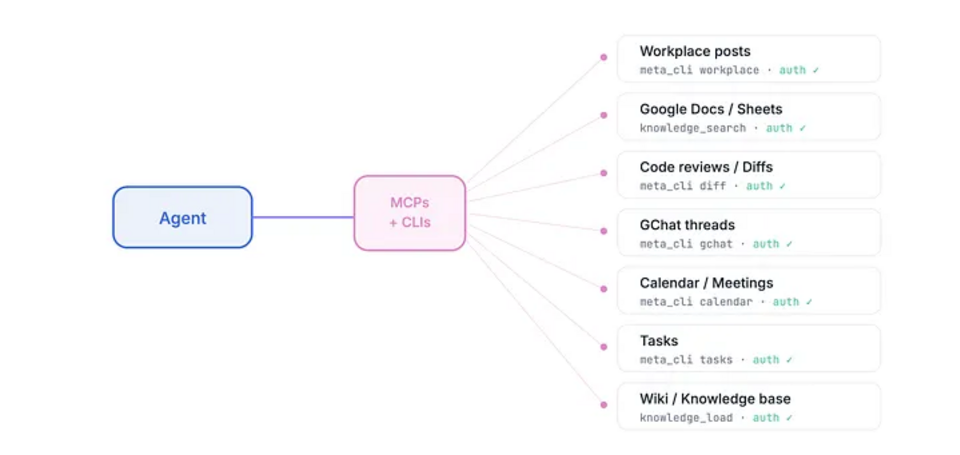

Meta built an AI agent system internally called the AI Second Brain that now has over 63,000 installs and ~10,000 daily active users across engineering, PM, design, legal, finance, comms, and sales, growing from zero in roughly three months after a non-technical PM's adoption post.

The architecture pairs Tiago Forte's PARA folder framework (Projects, Areas, Resources, Archives) with a root CLAUDE.md plus per-project CLAUDE.md files for progressive disclosure, an infrastructure layer of internal MCP servers and CLIs that give the agent scoped authenticated access to docs, meeting transcripts, task trackers, and code review, and a library of community-written skills as plain Markdown for workflows.

The post credits four lessons: invest in the tool-access infrastructure layer before applications, prefer progressive disclosure over context dumping, low-friction bootstrap drives viral adoption, and composable Markdown skills turned the plugin into a platform that users extended themselves.
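To make "progressive disclosure" concrete, here is a small sketch of the file layout idea: only the root file and the files on the path down to the active project are collected, and sibling projects are never read. It illustrates the pattern only. `context_files` is a hypothetical helper, not how Claude Code or Meta's plugin loads CLAUDE.md files.

```python
from pathlib import Path


def context_files(root: Path, project: Path, name: str = "CLAUDE.md") -> list[Path]:
    """Return the instruction files that apply to one project, root first."""
    root = root.resolve()
    rel = project.resolve().relative_to(root)  # raises ValueError if project is outside root
    found = []
    current = root
    for part in ("", *rel.parts):
        if part:
            current = current / part
        candidate = current / name
        if candidate.is_file():
            found.append(candidate)
    return found
```

For a vault laid out as `Projects/alpha` and `Projects/beta`, calling `context_files(vault, vault / "Projects" / "alpha")` would return the root file and alpha's file, and leave beta's out of the context entirely.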

aws.amazon.com

AWS now offers AWS MCP Server as a managed remote MCP server in US East (N. Virginia) and Europe (Frankfurt). MCP-compatible clients can use existing IAM credentials to access more than 15,000 AWS API operations.

For GA, AWS added IAM context keys, documentation retrieval without authentication, lower token use, server-side Python execution in a sandbox with no network access, and separate CloudWatch and CloudTrail visibility for MCP calls. AWS service teams also maintain Skills for the server.

blog.cloudflare.com

Cloudflare engineers built an AI code review platform on OpenCode.

They split GitLab integration, model providers, prompts, and policy into separate plugins. A coordinator assigns up to seven domain reviewers across security, performance, code quality, documentation, release checks, and AGENTS.md compliance.

They stream review events as JSONL, route work by risk tier, protect each model with circuit breakers and failback chains, and let Workers/KV override model choices. They also track incremental re-review state and deduplicate prompt context to control cost and latency.

In the first 30 days, Cloudflare ran 131,246 reviews across 48,095 merge requests in 5,169 repos. The team reported a 3m39s median runtime and a $0.98 median cost per run.
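The per-model circuit breaker plus an ordered chain of backup models is a generic reliability pattern, and a sketch helps show how the two pieces fit. This is not Cloudflare's implementation: `CircuitBreaker`, `review`, the thresholds, and the model names in the usage note are all hypothetical.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Opens after `threshold` consecutive failures, allows a probe after `cooldown` seconds."""

    def __init__(self, threshold: int = 3, cooldown: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.threshold, self.cooldown, self.clock = threshold, cooldown, clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        return self.clock() - self.opened_at >= self.cooldown  # half-open probe

    def record(self, ok: bool) -> None:
        if ok:
            self.failures, self.opened_at = 0, None
            return
        self.failures += 1
        if self.failures >= self.threshold:
            self.opened_at = self.clock()


def review(prompt: str, chain: list[tuple[str, Callable[[str], str]]],
           breakers: dict[str, CircuitBreaker]) -> tuple[str, str]:
    """Try each model in order, skipping any whose breaker is open."""
    for name, call in chain:
        breaker = breakers.setdefault(name, CircuitBreaker())
        if not breaker.allow():
            continue
        try:
            result = call(prompt)
        except Exception:
            breaker.record(False)
            continue
        breaker.record(True)
        return name, result
    raise RuntimeError("every model in the chain is unavailable")
```

Called with a chain such as `[("primary", call_primary), ("backup", call_backup)]`, a failing primary is tried until its breaker opens, then skipped until the cooldown passes, so a degraded provider adds no latency to later reviews.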

jola.dev

Local LLMs work best as supervised coding assistants. The author ran Qwen 3.5 9B (Q4) in LM Studio on a 24GB MacBook Pro and got about 40 tokens per second, with thinking mode, tool use, and a 128K context window. Results were mixed: Qwen helped with simple Elixir linter edits, then failed to resolve a basic git conflict, leaving conflict markers in place and trying to continue the rebase.
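The rebase failure is the kind of mistake a cheap deterministic check can catch before the agent moves on. A minimal sketch, assuming the supervising script can read each file's text first; `leftover_conflicts` is a hypothetical helper, not part of git or LM Studio, and the pattern is a heuristic.

```python
import re

# Git writes markers at the start of a line: seven of <, |, = or >.
# The "=======" separator must be the whole line; the others may carry a label.
MARKER = re.compile(r"<{7}( .*)?|\|{7}( .*)?|={7}|>{7}( .*)?")


def leftover_conflicts(text: str) -> list[int]:
    """Return 1-based line numbers that still look like conflict markers."""
    return [i for i, line in enumerate(text.splitlines(), start=1)
            if MARKER.fullmatch(line)]
```

A supervisor could refuse to run `git rebase --continue` whenever this returns a non-empty list for any file the model touched.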

⚙️ Tools, Apps & Software

github.com

Open-source omnichannel chatbot for agentic workflows via APIs, CLI, and MCP. An alternative to Wati, ManyChat, and Respond.io.

github.com

Utilyze measures how efficiently your GPU is doing useful work, not just whether it's busy. It runs live against your workload with negligible overhead.

github.com

Manage multiple Claude Code and OpenCode agents from a TUI or a web UI, for easy access on mobile. Also supports Mistral Vibe, Codex CLI, Gemini CLI, Pi.dev, Copilot CLI, and Factory Droid Coding. Uses tmux and git worktrees.

github.com

Turn any technical book PDF into a Claude Code skill — ready to study, reference, and use while you work.

🤔 Did you know?

Did you know that vLLM, one of the most widely used engines for serving large language models, speeds up inference by treating GPU memory like an operating system treats RAM? Its core trick, PagedAttention, borrows the idea of paging from OS design: instead of reserving one big contiguous block of memory for each request's KV cache (the stored attention state that grows as the model generates tokens), it splits the cache into small fixed-size blocks that can sit anywhere in GPU memory and are looked up through a block table. That single change lets the same model on the same GPU serve far more concurrent requests, and the original paper measured 2 to 4 times higher throughput than prior state-of-the-art serving systems.
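For the curious, the block-table bookkeeping can be sketched in a few lines. This toy allocator tracks block ids only, holds no tensors, and is not vLLM's code: `PagedKVCache`, `append_token`, and the block size of 4 are hypothetical choices made for the example.

```python
BLOCK_SIZE = 4  # tokens per block; a hypothetical value chosen for the example


class PagedKVCache:
    """Toy block-table allocator in the spirit of paging."""

    def __init__(self, num_blocks: int):
        self.free = list(range(num_blocks))      # physical blocks not in use
        self.tables: dict[str, list[int]] = {}   # request id -> physical block ids
        self.lengths: dict[str, int] = {}        # request id -> tokens stored

    def append_token(self, req: str) -> tuple[int, int]:
        """Reserve a slot for one more token; return (physical block, offset)."""
        n = self.lengths.get(req, 0)
        table = self.tables.setdefault(req, [])
        if n % BLOCK_SIZE == 0:                  # last block is full, or none yet
            if not self.free:
                raise MemoryError("no free KV blocks")
            table.append(self.free.pop())
        self.lengths[req] = n + 1
        return table[n // BLOCK_SIZE], n % BLOCK_SIZE

    def release(self, req: str) -> None:
        """Return a finished request's blocks to the pool."""
        self.free.extend(self.tables.pop(req, []))
        self.lengths.pop(req, None)
```

Two interleaved requests end up with non-adjacent blocks, and memory is claimed one block at a time as tokens arrive, which is why nothing has to be reserved up front for the longest possible output.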

🤖 Once, SenseiOne Said

"Your model is allowed to be wrong; your pipeline is not. MLOps is the price of admitting that accuracy is a local variable while reliability is a system property."

— SenseiOne

⚡Growth Notes

You shipped a RAG app that wowed the room six months ago, and now most of your week goes to tweaking the retrieval and swapping models while no one has looked at what users are actually asking lately. It still feels like progress because numbers move and PRs ship, but you're getting better at answering last quarter's questions, and the people using it have quietly moved on to new ones.

😂 Meme of the week