📝 A Few Words

AI made writing automation cheap. It did nothing for running it.

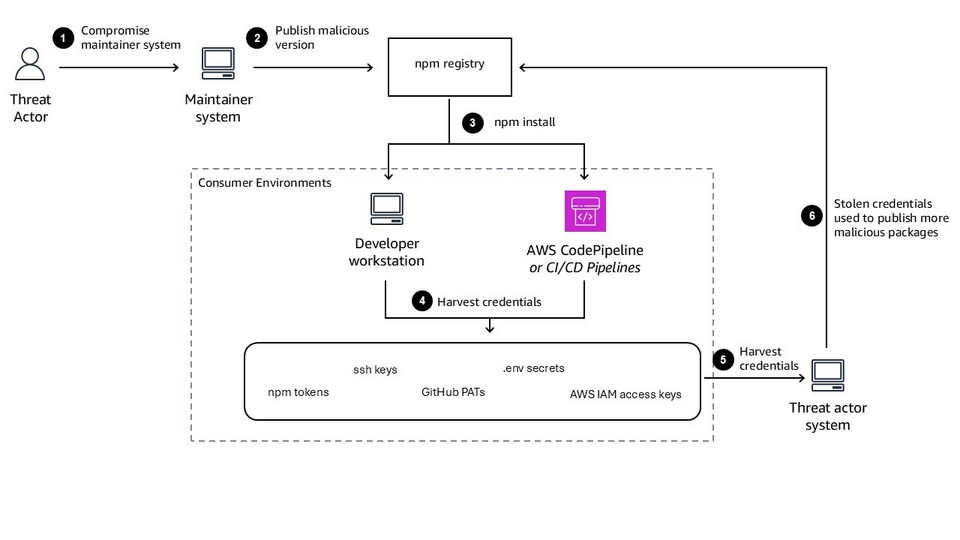

A model will hand you a working playbook in seconds. It will not tell you who's allowed to run it, against which inventory, with which credentials, on what schedule, or what happens when it dies halfway through 200 hosts. The cost of authoring dropped to near zero, but the cost of operating didn't move.

That gap is widening: the more playbooks get vibecoded, and the more agents start firing them off on their own, the more you need a layer that decides what actually executes, with what privileges, and leaves a record when it does. That layer is AWX.

So I released a book about it. AWX in Action: Ansible Orchestration at Scale (expanded edition) is the practical guide to deploying AWX on Kubernetes with the operator; wiring up projects, credentials, RBAC, workflows, and execution environments; scaling past a single node; using the CLI; understanding the settings; and much more.

The book is the half AI won't write for you. It's already the #1 Hot New Release in Distributed Systems & Computing on Amazon, which tells you how many people are stuck on the operating half.

You can get your copy on:

👉 FAUN.dev

👉 Amazon

Have a great week,

Aymen.
