FAUN.dev's Software Engineering Weekly Newsletter

🔗 View in your browser | ✍️ Publish on FAUN.dev | 🦄 Become a sponsor

SoftwareEngineeringLinks

This Week in Software Engineering, with Varbear the Bear

📝 A Few Words

The "AI makes engineers 10x faster" pitch is breaking.

A peer-reviewed RCT just showed experienced devs were 19% slower with AI tools.

They thought they were 20% faster.

I've written a book on AI-assisted coding and I train engineering teams on this almost every week. I'm pro-AI in the workflow. I'm not pro-lying about it.

Here's what the data actually says when you stack the studies:

👉 Juniors get a real boost. AI is a great tutor.

👉 Seniors slow down on their own codebases.

👉 Core maintainers review more code after AI adoption, while their own output drops.

👉 Team-level delivery stability drops even when individuals feel faster.

The mechanism is as simple as this: AI-generated code is fast to write and slow to review. The writer feels the speed, but the reviewer eats the cost. Inside a team, those are usually different people.

This is why "we shipped 90% more PRs this quarter" means nothing on its own. PRs are not throughput. Merged-and-stable code in production is throughput.

The asymmetry compounds:

👉 The junior ships fast.

👉 The senior gets slower reviewing AI-generated work they didn't write.

👉 The senior's own original output drops because review is now their full-time job.

👉 6 months later, the team thinks AI is working. The senior is burned out, and the codebase is full of debt they didn't choose.

So no, the answer is not "stop using AI". The answer is: measure team throughput, not individual velocity. Pay seniors for review quality, not just lines shipped, and treat AI-generated code as a draft that costs review time, not a finished asset.
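To make "merged-and-stable" concrete, here is a minimal sketch of how a team could count it. Everything in it is an assumption for illustration, not something taken from the studies above: the `PullRequest` record, the `reverted_or_hotfixed_at` field, and the 14-day stability window are all hypothetical and should be tuned to your own delivery process.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Assumption: a change counts as "stable" if it survives this long
# in production without a revert or hotfix. Pick your own window.
STABILITY_WINDOW = timedelta(days=14)


@dataclass
class PullRequest:
    """Hypothetical record; map it to whatever your VCS or CI exports."""
    author: str
    merged_at: Optional[datetime] = None
    reverted_or_hotfixed_at: Optional[datetime] = None


def is_stable(pr: PullRequest, now: datetime) -> bool:
    """True if the PR was merged and survived the full stability window."""
    if pr.merged_at is None:
        return False
    if now - pr.merged_at < STABILITY_WINDOW:
        return False  # too recent to judge either way
    if pr.reverted_or_hotfixed_at is None:
        return True
    return pr.reverted_or_hotfixed_at - pr.merged_at > STABILITY_WINDOW


def team_throughput(prs: list[PullRequest], now: datetime) -> dict[str, int]:
    """Report opened, merged, and merged-and-stable counts side by side."""
    return {
        "opened": len(prs),
        "merged": sum(1 for pr in prs if pr.merged_at is not None),
        "stable": sum(1 for pr in prs if is_stable(pr, now)),
    }
```

The point of returning all three numbers together is that the gap between "opened" and "stable" is where the review and rework cost shows up.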

The teams that figure this out in 2026 own 2027. The rest will spend 2027 paying down debt nobody can explain.

Have a great week!

Aymen

🔍 Inside this Issue

AI agents are getting organized, but the blast radius is getting bigger too: today is equal parts orchestration patterns, supply-chain gotchas, and a reminder that boring code still wins. If you have ever shipped something clever and then spent a week paying it back, these links are going to feel uncomfortably useful.

🤖 6 Multi-Agent Orchestration Design Patterns Every Developer Should Know

🏗️ Adventures in 30 Years in Engineering Productivity

🧹 I Deleted My Clever Code and the Database Got Better

🧨 Skill Issues: How We Discovered Supply Chain Attack Vectors in an AI Agent Skills Marketplace

🫠 Slop Creep: The Great Enshittification of Software

Write less magic, ship more truth.

Until next time!

FAUN.dev() Team

⭐ Patrons

iacconf.com

Your team can prompt its way to “working” Terraform. The problem is everything the model cannot see. In the IaCConf Keynote, Corey Quinn from Duckbill will break down failure modes, hidden dependencies, and why “it compiles” is a dangerous bar. You’ll walk away with a framework to review and constrain AI-generated IaC safely.

May 14. Free to attend. Register now.

eventbrite.co.uk

Modern platform teams are under pressure to scale cloud-native systems faster while improving reliability, security, developer experience, and operational efficiency. AI is changing how platforms are designed and operated — from intelligent automation and observability to AI-native developer platforms and autonomous operations.

Join leading experts from WSO2, CNCF, cloud-native, and DevSecOps communities for a practical workshop focused on building scalable, secure, and intelligent AI-native platforms.

Register here: Building AI-Native Platform Engineering Systems, Saturday, May 30, 7 PM - 11:59 PM GMT+5 (Eventbrite)

⭐ Sponsors

eventbrite.co.uk

Are Your APIs Ready for AI Agents? A Hands-on Workshop on May 23rd

AI agents are beginning to autonomously call APIs, chain services, and create integrations that most platforms were never designed to handle. This hands-on masterclass on Designing AI-ready APIs helps architects and developers build governed, predictable API ecosystems using OpenAPI, Overlay, and Arazzo.

Learn how to add guardrails, improve discoverability, and safely evolve existing APIs for automated consumption.

FAUN.dev readers get an exclusive 40% discount using code FAUN40.

bytevibe.co

You wrote it in 2019.

It is still in production.

ByteVibe sells the t-shirt.

Code FAUNDEV10 for 10% off.

🔗 Stories, Tutorials & Articles

boristane.com

The argument is that coding agents accelerate codebase decay by removing the natural speed limit on bad architectural decisions, compressing months of compounding mistakes into days. The defense is to invest ten times more in the planning phase, with concrete code snippets for the data models and abstractions that are one-way doors.

emmanuel326.github.io

A first-person walkthrough of rewriting an embedded key-value store after a friend spotted that the lock-free ring buffer was writing to a slot before claiming ownership, with the rebuilt single-mutex version 76 lines smaller, more correct, and explicit about every tradeoff (fsync on every write, no auto-compaction, no Options struct, lockfile for single-writer).
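For readers who want to picture the "single mutex, fsync on every write" tradeoff, here is a minimal sketch. It is not the author's code: the `TinyKV` class, its tab-separated log format, and the in-memory index are assumptions made for illustration, and the lockfile for single-writer enforcement is left out to keep it short.

```python
import os
import threading


class TinyKV:
    """Illustrative append-only key-value store guarded by one mutex.

    Assumption: keys and values are bytes without tab or newline characters.
    """

    def __init__(self, path: str) -> None:
        self._lock = threading.Lock()
        self._index: dict[bytes, bytes] = {}
        # "a+b" creates the file if missing and forces every write to the end.
        self._file = open(path, "a+b")
        self._replay()

    def _replay(self) -> None:
        """Rebuild the in-memory index from the log; later entries win."""
        self._file.seek(0)
        for line in self._file:
            key, _, value = line.rstrip(b"\n").partition(b"\t")
            self._index[key] = value

    def put(self, key: bytes, value: bytes) -> None:
        with self._lock:
            self._file.write(key + b"\t" + value + b"\n")
            self._file.flush()
            # Durability over speed: force the write to disk every time.
            os.fsync(self._file.fileno())
            # Update the index only after the write is durable.
            self._index[key] = value

    def get(self, key: bytes):
        with self._lock:
            return self._index.get(key)

    def close(self) -> None:
        with self._lock:
            self._file.close()
```

One lock around every operation is slower than a lock-free design under contention, but there is no window in which a slot can be written before it is owned.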

orca.security

Orca Security researchers identified four attack primitives in an AI coding-agent skills marketplace: install-count inflation without authentication, security scans that run only at creation and at popularity thresholds, same-name overrides without user alerts, and bulk updates without per-skill review or version pinning.

The researchers used three proofs of concept to combine those flaws into bait-and-switch, nested injection, and delayed weaponization attacks. In each case, an attacker could publish malicious Markdown skills, get them through audits, reach users, and execute code on user machines.

carloarg02.medium.com

Carlos Arguelles collects a decade of writing on engineering productivity at Microsoft, Amazon, and Google, covering monorepo versus microrepo CI/CD philosophies, hermetic test environments, ML-based bug deduplication, code coverage debates, and the AI-driven shift toward autonomous validation of generated code.

build5nines.com

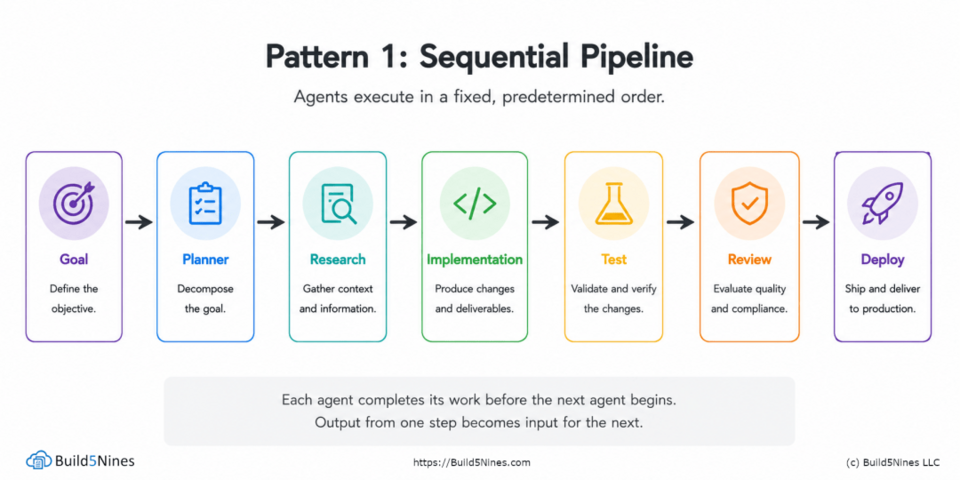

Chris Pietschmann walks through six multi-agent orchestration patterns (sequential pipeline, fan-out/fan-in, hierarchical delegation, consensus, event-driven, iterative refinement) and the state management primitives that let them compose.
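As a rough illustration of one of those patterns, fan-out/fan-in, here is a minimal sketch using `asyncio`. It is not taken from the article: `run_agent` is a hypothetical stand-in for a real model or tool call, and joining results with a separator is just one possible fan-in step.

```python
import asyncio


async def run_agent(name: str, task: str) -> str:
    """Hypothetical agent call; a real one would hit a model or tool API."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return f"{name}: {task.upper()}"


async def fan_out_fan_in(task: str, agent_names: list[str]) -> str:
    # Fan-out: start every agent on the same task concurrently.
    results = await asyncio.gather(*(run_agent(n, task) for n in agent_names))
    # Fan-in: merge the results; gather preserves the input order.
    return " | ".join(results)


def orchestrate(task: str, agent_names: list[str]) -> str:
    """Synchronous entry point for callers that are not already async."""
    return asyncio.run(fan_out_fan_in(task, agent_names))
```

Calling `orchestrate("review pr", ["security", "style"])` runs both hypothetical agents concurrently and returns one combined string.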

⚙️ Tools, Apps & Software

github.com

A complete AI agency at your fingertips - From frontend wizards to Reddit community ninjas, from whimsy injectors to reality checkers. Each agent is a specialized expert with personality, processes, and proven deliverables.

github.com

Graphs that teach > graphs that impress. Turn any codebase or knowledge base (Karpathy LLM wiki) into an interactive knowledge graph you can explore, search, and ask questions about. Works with Claude Code, Codex, Cursor, Copilot, Gemini CLI, and more.

🤔 Did you know?

Did you know that cbindgen, a tool Mozilla built to mix Rust into Firefox, automatically generates C and C++ header files from Rust source code so the two languages stay in sync as the codebase changes? It was created during work on WebRender, Firefox's Rust-based graphics engine, after hand-written bindings kept causing subtle bugs. Tools like this are how large projects safely adopt a new language piece by piece instead of rewriting everything at once.

🤖 Once, SenseiOne Said

"Every dev tool promises leverage, then quietly becomes a dependency you have to debug before you can debug your code. The best teams don't chase fewer bugs; they chase fewer layers where bugs can hide."

— SenseiOne

⚡ Growth Notes

You got promoted because you were the person who could unblock anyone on the codebase, and now your calendar is 80% unblocking and 20% the work you were actually promoted to do. The title moved but the reflex didn't, and the org is quietly learning that your seniority means faster answers, not better systems.

😂 Meme of the week