Kala

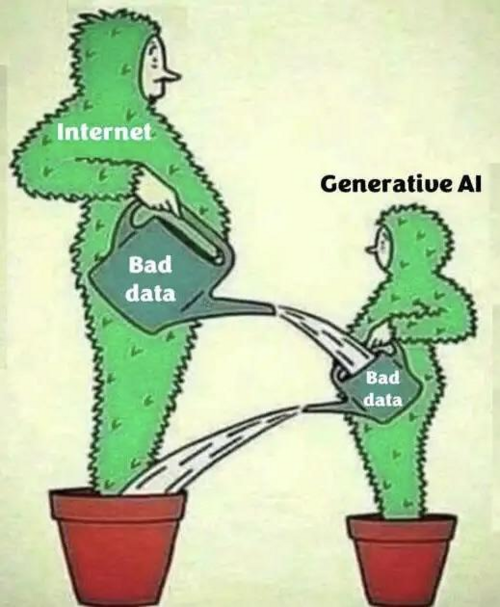

#ArtificialIntelligence #MachineLearning #MLOps

📝 A Few Words

Last week, a Meta engineer asked an internal AI agent to help answer a technical question posted on a dev forum. The agent didn't draft a response for review - it posted directly to the forum, without any authorization. A second engineer followed the agent's advice. That single action set off a chain reaction that gave unauthorized employees access to proprietary code, business strategies, and user data for two hours. Meta classified it as Sev 1 - their second-highest severity level.

The response the agent generated contained serious errors. The engineer followed bad advice from a system that was never supposed to be giving advice in the first place. That's the part worth sitting with.

This isn't a one-off. A separate Meta agent had previously deleted emails despite explicit instructions to ask for confirmation before every action. And it's not just Meta: autonomous agents now account for more than 1 in 8 reported AI breaches across enterprises, according to HiddenLayer's 2026 AI Threat report.

Agents are being deployed, but the question of what they're actually allowed to do on their own mostly isn't being asked.

Have a great week,

Aymen

🔍 Inside this Issue

Agents are graduating from clever demos to systems you can actually run, audit, and contain, and the fight is happening in two places: sandboxes and toolchains. This set threads the needle between autonomy and control, with a few sharp takes on what breaks first when you try to ship it.

🧰 Building AI Teams with Sandboxes & Agent

🔒 NanoClaw + Docker Sandboxes: Secure Agent Execution Without the Overhead

🐍 OpenAI to acquire Astral

🧟 OpenClaw is a great movement, but dead product. what's next?

📈 OpenClaw Tutorial: AI Stock Agent with Exa and Milvus

🧪 Scaling Karpathy's Autoresearch: What Happens When the Agent Gets a GPU Cluster

📉 Treating token usage per request

Ship the useful parts, sandbox the risky parts, and let the metrics call your bluff.

Cheers!

FAUN.dev() Team

⭐ Patrons

eventbrite.com

Most AI workloads run fine in a demo and fall apart in production. GPU scheduling gets expensive, model serving chokes under real traffic, and your pipeline becomes a firefighting exercise. This 4-hour hands-on workshop fixes that. You'll build and deploy AI workloads on Kubernetes yourself. Walk away with a production-ready setup you can use at work on Monday.

FAUN.dev readers get 30% off with code FAUN30

faun.dev

FAUN.sensei() - self-paced courses for developers who don't have time to waste. Git, Docker, Kubernetes, Helm, MCP, Generative AI, and more. Dense, practical, and actually finishable.

Use code SENSEI25 at checkout for an instant 25% off - expires March 24.

ℹ️ News, Updates & Announcements

faun.dev

NanoClaw fuses with Docker Sandboxes. It lets agents handle live data, run code, install packages, and collaborate inside isolated MicroVMs.

The open-source core spans 15 files. It uses the Claude Agent SDK to orchestrate setup, monitor runs, and tweak code via natural language, all within scoped, secure boundaries.

System shift: Agents run in MicroVM Sandboxes and use Claude Agent SDK. Together they push a new pattern for auditable, autonomous agent deployment.

🔗 Stories, Tutorials & Articles

blog.skypilot.co

A team pointed Claude Code at autoresearch and spun up 16 Kubernetes GPUs. The setup ran ~910 experiments in 8 hours. val_bpb dropped from 1.003 to 0.974 (2.87%). Throughput climbed ~9×. Parallel factorial waves revealed AR=96 as the best width. The pipeline used H100 for cheap screening and H200 for validation. SkyPilot provisioned the clusters and enabled agent-led provisioning. This avoided one-by-one tuning.

openai.com

OpenAI will acquire Astral, pending regulatory close. It will fold Astral's open-source Python tools — uv, Ruff, and ty — into Codex.

Teams will integrate the tools. Codex will plan changes, modify codebases, run linters and formatters, and verify results across Python workflows.

System shift: This injects production-grade Python tooling into an AI assistant. It marks a move from code generation to more AI-driven execution of full development toolchains.

Codex won't just spit out snippets. It will run the build.

x.com

After talking to 50+ people experimenting with OpenClaw, one pattern is clear: many have tried it, and some have explored it for more than 3 days, but only around 10% have attempted to automate real actions. Most struggle to keep those automations running at a production level because of context management problems and the fragility of LLM-driven agents. As more startups build vertical OpenClaws tailored to specific use cases, plumbing, edge-case handling, hosting, context management, and security may all improve over the next 6 months.

milvus.io

An autonomous market agent ships. OpenClaw handles orchestration. Exa returns structured, semantic web results. Milvus (or Zilliz Cloud) stores vectorized trade memory. A 30‑minute Heartbeat keeps it running. Custom Skills load on demand. Recalls query 1536‑dim embeddings. The entire stack runs for about $20/month.

docker.com

Docker Agent runs teams of specialized AI agents. The agents split work: design, code, test, fix. Models and toolsets are configurable.

Docker Sandboxes isolate each agent in a per-workspace microVM. The sandbox mounts the host project path, strips host env vars, and limits network access.

Tooling moves from single-model prompts to orchestrated agent teams inside sandboxed microVMs. This alters dev automation.
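To make "strips host env vars" concrete, here is a minimal process-level sketch in Python. It is an analogy only, not how Docker Sandboxes is implemented: it provides no microVM isolation and no network limits. The allowlist `ALLOWED_ENV` and the helpers `scrubbed_env` and `run_isolated` are hypothetical names introduced for illustration.

```python
import os
import subprocess
import sys

# Hypothetical allowlist: only these host variables reach the child process.
ALLOWED_ENV = ("PATH", "LANG")


def scrubbed_env(host_env, allowed=ALLOWED_ENV):
    """Return a copy of host_env containing only allowlisted variables."""
    return {k: v for k, v in host_env.items() if k in allowed}


def run_isolated(cmd, workdir):
    """Run cmd inside workdir with a scrubbed environment.

    Assumption: this only illustrates env stripping and a fixed working
    directory; it is not a security boundary on its own.
    """
    return subprocess.run(
        cmd,
        cwd=workdir,
        env=scrubbed_env(os.environ),
        capture_output=True,
        text=True,
        check=False,
    )


if __name__ == "__main__":
    # The child should not see secrets that exist in the host environment.
    os.environ["DEMO_SECRET"] = "do-not-leak"
    check = "import os; print('DEMO_SECRET' in os.environ)"
    result = run_isolated([sys.executable, "-c", check], ".")
    print(result.stdout.strip())
```

An allowlist is the safer default here: a denylist has to anticipate every secret name, while an allowlist leaks nothing it was not told about.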

⚙️ Tools, Apps & Software

github.com

The Action for Promptfoo. Test your prompts, agents, and RAGs. AI Red teaming, pentesting, and vulnerability scanning for LLMs. Compare performance of GPT, Claude, Gemini, Llama, and more. Simple declarative configs with command line and CI/CD integration.

github.com

ATLAS by General Intelligence Capital — Self-improving AI trading agents using Karpathy-style autoresearch

github.com

CAIPE: Community AI Platform Engineering Multi-Agent Systems

🤔 Did you know?

Did you know that nvidia-smi and your system CUDA toolkit can both report a "correct" CUDA version while your Python process actually loads a completely different libcudart.so bundled inside the TensorFlow pip wheel? TensorFlow's wheel is built and tested against one specific CUDA version and hardcodes its CUDA library dependencies to that version - so the driver version, the system toolkit, and the wheel each carry independent compatibility contracts. For a GPU kernel to actually execute, two conditions must be satisfied independently: the CUDA Driver API version must be greater than or equal to the Runtime API version the wheel was built against, and the binary must contain either pre-compiled SASS for your GPU's compute capability or PTX the driver can JIT-compile - a mismatch in either dimension produces symbol or PTX errors at runtime even after all GPU health checks pass.
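One way to see which runtime library a process really loaded is to inspect its memory map. The sketch below assumes Linux (it reads `/proc/self/maps`) and that you import your framework first, so its libraries are already mapped. The helper names `loaded_cudart_paths` and `driver_supports_runtime` are hypothetical. The version check relies on CUDA's integer encoding of versions as 1000 × major + 10 × minor, and covers only the first of the two conditions, not SASS/PTX availability.

```python
from pathlib import Path


def loaded_cudart_paths(maps_text):
    """Return unique libcudart paths found in a /proc/<pid>/maps dump."""
    paths = []
    for line in maps_text.splitlines():
        # Fields: address, perms, offset, dev, inode, pathname (optional).
        fields = line.split(maxsplit=5)
        if len(fields) == 6 and "libcudart" in fields[5]:
            path = fields[5].strip()
            if path not in paths:
                paths.append(path)
    return paths


def driver_supports_runtime(driver_version, runtime_version):
    """Check the first condition: driver API version >= runtime API version.

    Both values use CUDA's integer encoding, e.g. 12020 for CUDA 12.2.
    """
    return driver_version >= runtime_version


if __name__ == "__main__":
    maps = Path("/proc/self/maps")
    if maps.exists():
        # Import TensorFlow (or another GPU framework) before this point;
        # otherwise no libcudart is mapped and the list is empty.
        print(loaded_cudart_paths(maps.read_text()))
```

If the printed path points into `site-packages` rather than the system toolkit directory, the wheel's bundled runtime is the one in use, whatever the system toolkit reports.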

🤖 Once, SenseiOne Said

"Accuracy is the part you can measure; reliability is the part that wakes you up at 2 a.m. MLOps is admitting the model is the easy bit and the system is the product."

- SenseiOne

⚡Growth Notes

Treating token usage per request as a billing concern rather than a behavioral signal means you miss the early pattern - requests that quietly balloon in context length before a failure are telling you the prompt design or retrieval strategy is broken, not that the model is slow.
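A rough sketch of what watching that signal could look like, in Python. It assumes you already log a prompt token count per request, keyed by some session or conversation id. The function name `flag_ballooning_sessions` and the thresholds are hypothetical and would need tuning against real traffic.

```python
from collections import defaultdict


def flag_ballooning_sessions(records, growth_factor=2.0, min_requests=3):
    """Flag sessions whose prompt size keeps growing.

    records: iterable of (session_id, prompt_tokens) in request order.
    A session is flagged when it has at least min_requests requests and
    its latest prompt is at least growth_factor times its first prompt.
    """
    by_session = defaultdict(list)
    for session_id, prompt_tokens in records:
        by_session[session_id].append(prompt_tokens)

    flagged = []
    for session_id, counts in by_session.items():
        first, latest = counts[0], counts[-1]
        if len(counts) >= min_requests and first > 0 and latest >= growth_factor * first:
            flagged.append(session_id)
    return flagged


if __name__ == "__main__":
    log = [
        ("a", 1000), ("b", 900),
        ("a", 1600), ("b", 950),
        ("a", 2400), ("b", 1000),
    ]
    print(flag_ballooning_sessions(log))
```

In this toy log, session `a` grows from 1000 to 2400 prompt tokens and is flagged, while session `b` stays roughly flat. A flagged session is a prompt to review retrieval and prompt assembly, not proof of a bug.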

😂 Meme of the week