DevOpsLinks

This week in DevOps, with Dolly the Cow

📝 A Few Words

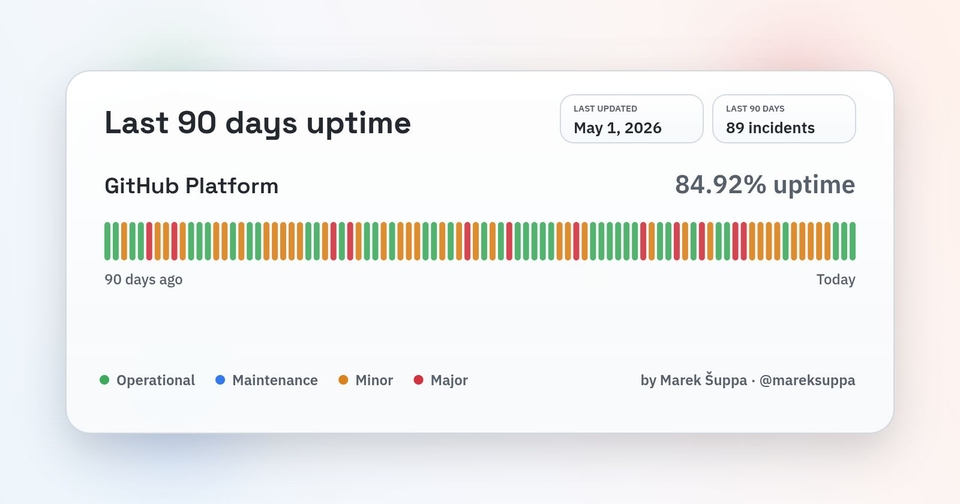

84.92%.

That's GitHub's real uptime over the last 90 days. Not the 99.something the official status page claims.

The reconstructed one, built by Marek Šuppa from public incident data, tells a different story.

👉 89 incidents in 90 days: roughly one every day, on average.
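For a sense of scale, here is a quick back-of-the-envelope calculation. It is only a sketch: it assumes the 84.92% figure applies uniformly to the whole 90-day window, which may not match how the reconstruction weighs partial degradations.

```python
# Back-of-the-envelope: what 84.92% uptime over 90 days would imply.
# Assumption (not from the source): the percentage covers the full window evenly.

WINDOW_DAYS = 90
UPTIME_PCT = 84.92
INCIDENTS = 89


def downtime_hours(uptime_pct: float, window_days: int) -> float:
    """Hours in the window that were not counted as 'up'."""
    return (1 - uptime_pct / 100) * window_days * 24


def incidents_per_day(incidents: int, window_days: int) -> float:
    """Average number of incidents per day over the window."""
    return incidents / window_days


hours = downtime_hours(UPTIME_PCT, WINDOW_DAYS)
print(f"Implied downtime: {hours:.1f} hours (~{hours / 24:.1f} days)")
print(f"Incident rate: {incidents_per_day(INCIDENTS, WINDOW_DAYS):.2f} per day")
```

Under that assumption, the gap between 84.92% and 100% works out to roughly two weeks of degraded service per quarter.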

Why now, after 15 years of being the default?

The answer lies in the traffic mix. GitHub was built for humans, so its load used to follow a predictable cadence: commits, pushes, PRs, CI runs, reviews, merges, and other day-to-day operations.

🤖 Then we plugged in agents.

Copilot fires requests on every keystroke. Coding agents auto-open PRs and auto-merge. Bots review, label, triage, and rerun pipelines without sleeping. Where one engineer used to fire ten API calls an hour, an agent fires hundreds.

I'm living this. Some of my open source repos get agent-opened PRs almost every day, and I've stopped being able to keep up with them.

GitHub's own postmortems blame "rapid load growth" and a system where one broken piece takes the rest with it.

But this isn't a GitHub problem. It's the first visible crack in infrastructure that was never designed for non-human users. Package registries, CI providers, container registries, secret managers: they're next.

GitHub won't be the last platform to crack under agent load!

Have a great week,

Aymen

🔍 Inside this Issue

One port left open, one month of logs, and suddenly the internet looks a lot less random and a lot more automated. Pair that with sober incident prep, a reality check on queues, and a couple of infrastructure moves that feel like cheating (in a good way).

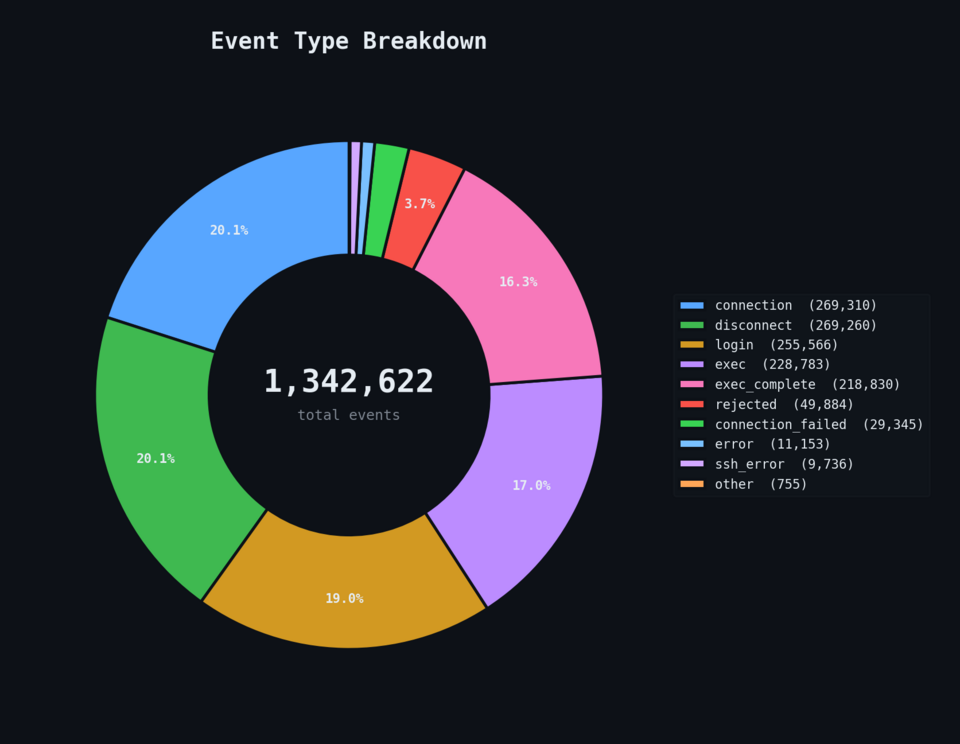

🕵️ I Left Port 22 Open on the Internet for 54 Days. Here's Who Showed Up.

🚨 Incidents Will Happen. Are You (Actually) Prepared?

🧱 Why Queues Don’t Fix Scaling Problems

💸 Migrating from DigitalOcean to Hetzner: From $1,432 to $233/month With Zero Downtime

🗂️ S3 Files and the changing face of S3

⚙️ Jira Automation Best Practices That Will Save You Time

Go make future-you a little harder to surprise.

Happy coding!

FAUN.dev() Team

⭐ Patrons

iacconf.com

Your team can prompt its way to “working” Terraform. The problem is everything the model cannot see. In the IaCConf Keynote, Corey Quinn from Duckbill will break down failure modes, hidden dependencies, and why “it compiles” is a dangerous bar. You’ll walk away with a framework to review and constrain AI-generated IaC safely.

May 14. Free to attend. Register now.

eventbrite.co.uk

Modern platform teams are under pressure to scale cloud-native systems faster while improving reliability, security, developer experience, and operational efficiency. AI is changing how platforms are designed and operated — from intelligent automation and observability to AI-native developer platforms and autonomous operations.

Join leading experts from WSO2, CNCF, cloud-native, and DevSecOps communities for a practical workshop focused on building scalable, secure, and intelligent AI-native platforms.

Register here: Building AI-Native Platform Engineering Systems, Saturday, May 30, 7 PM - 11:59 PM GMT+5 (Eventbrite)

🐾 From FAUNers

faun.dev

The author explains Jira automation limits: Free plans include 100 runs per month, Premium plans include 1,000 runs per user per month, and Enterprise plans have no run cap. Atlassian counts a run after a triggered rule completes at least one action. To reduce usage, the author suggests narrow triggers, early conditions, combined actions, disabling unused rules, and using marketplace apps for niche workflows.
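The limits above can be turned into a rough budget check. This is a sketch under stated assumptions: the function names are hypothetical, only the three plans mentioned in the summary are covered, and the Premium allowance is assumed to be pooled across users.

```python
from typing import List, Optional


def monthly_run_limit(plan: str, users: int = 1) -> Optional[int]:
    """Monthly automation run allowance for the plans named in the summary.

    Returns None when the plan has no run cap.
    """
    plan = plan.lower()
    if plan == "free":
        return 100
    if plan == "premium":
        # Assumption: the per-user allowance is pooled across the site.
        return 1000 * users
    if plan == "enterprise":
        return None
    raise ValueError(f"plan not covered by this sketch: {plan}")


def counted_runs(actions_completed: List[int]) -> int:
    """Count executions that completed at least one action.

    Each list item is the number of actions one triggered rule completed.
    """
    return sum(1 for actions in actions_completed if actions >= 1)


# Four triggered rules, two of which stopped at a condition before any action.
print(counted_runs([0, 2, 1, 0]))
print(monthly_run_limit("premium", users=25))
```

It also shows why early conditions help: an execution that exits before its first action does not count against the allowance.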

⭐ Sponsors

eventbrite.co.uk

Are Your APIs Ready for AI Agents? A Hands-on Workshop on May 23rd

AI agents are beginning to autonomously call APIs, chain services, and create integrations that most platforms were never designed to handle. This hands-on masterclass on Designing AI-ready APIs helps architects and developers build governed, predictable API ecosystems using OpenAPI, Overlay, and Arazzo.

Learn how to add guardrails, improve discoverability, and safely evolve existing APIs for automated consumption.

FAUN.dev readers get an exclusive 40% discount using code FAUN40.

bytevibe.co

kubectl get pods.

kubectl logs.

kubectl describe.

kubectl apply.

kubectl exec.

You typed it 3,413 times this week. Wear it!

Code FAUNDEV10 for 10% off.

🔗 Stories, Tutorials & Articles

hashnode.dev

A 54-day SSH honeypot on port 22 logged 268,000+ login attempts from 7,556 IPs, with 99.6% of attackers running a single automated fingerprinting command and only 28 ever opening an interactive shell. The data shows hardcoded IoT credentials and Solana validator hunting dominating the password lists, a single Belgian residential IP firing 156,000 attempts on its own, and a small professional tier using /dev/tcp/ socket tricks with rotating C2 infrastructure to drop UPX-packed ELF binaries.

uptimelabs.io

Joe Mckevitt, CTO of Uptime Labs, argues that incident prevention and incident preparation are not substitutes, and that organizations relying on the heroic engineer who knows the infrastructure at 2am have a habit, not a strategy. The piece pushes for a deliberate playbook (practiced communication, pre-understood failure behavior, repeatable response) over runbooks decaying in Confluence, and notes that AI in the execution path will reshape failure modes rather than reduce the need for human-led preparation.

isayeter.com

A walkthrough of migrating 248 GB of MySQL across 30 databases, 34 Nginx sites, GitLab EE, and Neo4j from a $1,432/month DigitalOcean droplet to a $233/month Hetzner AX162-R dedicated box with no downtime.

The path: mydumper/myloader with 32 threads for the bulk MySQL 5.7 to 8.0 import, master-to-replica sync from a recorded binlog position with slave_exec_mode = IDEMPOTENT to bypass 1062 errors, scripted DNS TTL reduction to 300s on A/AAAA records only, converting the old Nginx configs into reverse proxies during propagation, and a final flip of all A records via the DigitalOcean API.
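The "A/AAAA records only" TTL step can be sketched as a small planning function. This is not the author's script: the record shape (`id`, `type`, `name`, `ttl`) and the function name are assumptions for illustration, and the sketch only builds the list of changes without calling any DNS API.

```python
from typing import Dict, List, Tuple

TARGET_TTL = 300               # seconds, as in the walkthrough
ADDRESS_TYPES = {"A", "AAAA"}  # other record types are left untouched


def plan_ttl_updates(
    records: List[dict], target_ttl: int = TARGET_TTL
) -> List[Tuple[int, Dict[str, int]]]:
    """Return (record_id, payload) pairs for address records above the target TTL."""
    updates = []
    for rec in records:
        if rec["type"] in ADDRESS_TYPES and rec["ttl"] > target_ttl:
            updates.append((rec["id"], {"ttl": target_ttl}))
    return updates


# Hypothetical records for a single domain.
records = [
    {"id": 1, "type": "A", "name": "@", "ttl": 3600},
    {"id": 2, "type": "AAAA", "name": "@", "ttl": 3600},
    {"id": 3, "type": "MX", "name": "@", "ttl": 3600},
    {"id": 4, "type": "A", "name": "www", "ttl": 300},
]
print(plan_ttl_updates(records))
# → [(1, {'ttl': 300}), (2, {'ttl': 300})]
```

Separating the plan from the API calls makes it easy to review the change list before touching live DNS.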

dzone.com

Queues do not create capacity, they delay the moment insufficient capacity becomes visible, and sustained overload turns a queue from a smoothing buffer into a cascading failure that takes down databases, connection pools, and consumer instances before it ever hits the queue's own limits.
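A toy simulation makes the point concrete. It is a minimal sketch with made-up rates, assuming constant arrival and processing rates and an unbounded queue.

```python
from typing import List


def simulate_backlog(
    arrivals_per_sec: int, capacity_per_sec: int, seconds: int
) -> List[int]:
    """Return the queue depth at the end of each second."""
    backlog = 0
    history = []
    for _ in range(seconds):
        backlog += arrivals_per_sec
        # Consumers can never process more than what is waiting.
        backlog -= min(backlog, capacity_per_sec)
        history.append(backlog)
    return history


# Hypothetical numbers: consumers handle 100 msg/s.
overloaded = simulate_backlog(arrivals_per_sec=120, capacity_per_sec=100, seconds=60)
healthy = simulate_backlog(arrivals_per_sec=80, capacity_per_sec=100, seconds=60)

print(overloaded[-1])        # → 1200 messages waiting after one minute
print(overloaded[-1] / 100)  # → 12.0 seconds of delay for the newest message
print(healthy[-1])           # → 0
```

With only 20% sustained overload, the backlog and the latency grow without bound. The queue changes when the shortfall shows up, not whether it does.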

allthingsdistributed.com

AWS launched S3 Files, an EFS-backed feature that mounts any S3 bucket or prefix as an NFS filesystem on EC2, containers, or Lambda, with changes batched back to S3 roughly every 60 seconds.

Rather than collapsing file and object semantics into a single model (an early design attempt called "EFS3" that the team abandoned after deciding it produced only the lowest common denominator), Andy Warfield describes a "stage and commit" architecture borrowed from version control: EFS holds the live filesystem view, S3 stays the source of truth on conflict, and key names that can't be represented in both worlds emit events instead of failing.

⚙️ Tools, Apps & Software

github.com

Automatically completes the full workflow from requirement research → research review → planning → plan review → development → development review → test using AI large language models. Capable of autonomously handling medium to large-scale engineering projects.

github.com

Live Docker & Kubernetes infrastructure visualization - containers, pods, volumes, and networks in one visual map. No VPN, no inbound ports.

🤔 Did you know?

Did you know that when you run kubectl rollout status and it says your deployment succeeded, all it actually means is that enough Pods passed their readiness probes? It does not guarantee that those Pods are receiving traffic yet. Traffic in Kubernetes flows through a separate object called an EndpointSlice (the list of Pod IPs a Service or Ingress actually routes to), and it can take seconds to a couple of minutes for new Pods to propagate through kube-proxy and your ingress controller. This is why robust release pipelines verify real traffic against the new version rather than trusting the rollout command alone.
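One way to verify real traffic is to sample responses through the public entry point and check which version answered. The sketch below assumes the service exposes its version somehow (for example in a header or a `/version` endpoint). `fetch_version` is a hypothetical callable you would supply, and the 95% threshold is an arbitrary choice.

```python
from typing import Callable, Iterable, List


def sample_versions(fetch_version: Callable[[], str], samples: int) -> List[str]:
    """Call the (user-supplied) fetcher repeatedly and collect reported versions."""
    return [fetch_version() for _ in range(samples)]


def traffic_verified(
    observed: Iterable[str], expected: str, min_ratio: float = 0.95
) -> bool:
    """True when enough sampled responses came from the expected version."""
    versions = list(observed)
    if not versions:
        return False  # no evidence is not success
    hits = sum(1 for v in versions if v == expected)
    return hits / len(versions) >= min_ratio


# Stand-in fetcher for illustration; a real one would make an HTTP request
# through the Service or Ingress, not directly to a Pod.
responses = iter(["v1", "v2", "v2", "v2"])
observed = sample_versions(lambda: next(responses), samples=4)

print(traffic_verified(observed, expected="v2"))  # → False, old Pods still serve
```

A pipeline would typically repeat this check with a timeout after the rollout command returns, and only then mark the release as done.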

🤖 Once, SenseiOne Said

"Cloud makes capacity feel infinite, so teams stop measuring it and start paying for their assumptions. SRE is just accounting with better dashboards: every shortcut becomes a recurring charge. Automation doesn't remove toil; it compounds it at scale."

— SenseiOne

⚡Growth Notes

Your Terraform repo has 40,000 lines, 6 engineers can deploy to prod, and the one person who actually understands the network module is on vacation this week. Nothing is on fire, so it doesn't feel like a problem, but every incident response, every new hire ramp, and every architecture decision is now silently routed through one person's calendar.

😂 Meme of the week