DevOpsLinks

This week in DevOps, with Dolly the Cow

📝 A Few Words

The Anthropic incident is a perfect masterclass in The Reliance Paradox.

Anthropic built "Undercover Mode" to hide AI involvement when employees use Claude on public repos, and to prevent internal details like model codenames from leaking in the process.

They hid secret model names like "Capybara" by spelling them out one character code at a time (String.fromCharCode(99,97,112,...)) so that Anthropic's own build tools, which scan the codebase for forbidden strings before publishing, wouldn't catch them.
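To see why that trick works, here is a minimal sketch of a naive forbidden-string scanner. The blocklist, the scanner function, and both source snippets are hypothetical and are not Anthropic's actual tooling; only the three character codes come from the text above.

```typescript
// Hypothetical blocklist: "cap" is what the three character codes quoted above decode to.
const FORBIDDEN: string[] = ["cap"];

// Naive scan: returns the forbidden words that appear literally in the source text.
function scanSource(source: string, forbidden: string[]): string[] {
  const lower = source.toLowerCase();
  return forbidden.filter((word) => lower.includes(word.toLowerCase()));
}

// Two hypothetical source lines that produce the same string at runtime.
const literalSource = 'const name = "cap";';
const encodedSource = "const name = String.fromCharCode(99, 97, 112);";

const literalHits = scanSource(literalSource, FORBIDDEN); // the literal is flagged
const encodedHits = scanSource(encodedSource, FORBIDDEN); // the encoded form is not
const decoded = String.fromCharCode(99, 97, 112); // "cap"
```

A text-level scan only sees the numbers, so the encoded version passes even though it evaluates to the same string.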

They also kept a blocklist of sensitive words that gets automatically stripped from the product before it ships to users.

Then they shipped the entire source code in a .map file because someone forgot *.map in .npmignore.
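A cheap guard against this kind of slip is to check the list of files that will be published and fail if any source map is in it. The sketch below assumes you already have that list as an array of paths (for example, collected from the output of npm pack --dry-run); the function name and the file names are made up for illustration.

```typescript
// Returns every path in the publish list that looks like a source map.
function findSourceMaps(files: string[]): string[] {
  return files.filter((file) => file.toLowerCase().endsWith(".map"));
}

// Hypothetical publish list; in practice this would come from your packaging step.
const packedFiles: string[] = ["package.json", "cli.js", "cli.js.map", "README.md"];

const leakedMaps = findSourceMaps(packedFiles); // ["cli.js.map"]

// A CI step could refuse to publish whenever this is false.
const safeToPublish = leakedMaps.length === 0;
```

The point is not the five lines of code but that the check runs against the artifact that actually ships, not against the ignore file someone was supposed to update.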

This is the core of the paradox: the more we trust a "perfect" system to keep us safe, the less we pay attention to the simple human mistakes that eventually break it.

If the world's leading AI safety experts can't even secure a basic config file, "perfect" safety is actually just an illusion. You already know this :)

Have a great week,

Aymen

🔍 Inside this Issue

AI tooling is starting to look less like magic and more like an audit trail, and the npm supply chain is still a little too easy to poison on a quiet afternoon. On the other side: practical release tactics, hard-earned scaling lessons, and a reminder that RAM is now a line item worth optimizing.

🕵️ 16 Things Anthropic Didn't Want You to Know About Claude Code

🚦 Deployment strategies: Types, trade-offs, and how to choose

🧠 RAM is getting expensive, so squeeze the most from it

🏗️ Scaling a Monolith to 1M LOC: 113 Pragmatic Lessons from Tech Lead to CTO

🤖 Scaling Autonomous Site Reliability Engineering: Architecture, Orchestration, and Validation for a 90,000+ Server Fle

🧨 Supply Chain Attack on Axios Pulls Malicious Dependency from npm

Ship smarter, trust less, measure everything.

Until next time!

FAUN.dev() Team

⭐ Patrons

faun.dev

Most developers pick up Git by copying commands from the internet and hoping for the best. It works, until it doesn't. One messy merge conflict or a detached HEAD, and suddenly you're stuck with no idea what went wrong or how to fix it.

This course takes a different approach. Instead of handing you a list of commands to memorize, it builds your understanding from the ground up - how Git actually thinks about your files, your history, and your changes.

You'll go from "what's a commit?" to confidently branching, merging, resolving conflicts, collaborating with a team, and keeping a clean project history.

No prior Git experience needed. Just basic comfort with a terminal and you're good to go. By the end of the day, you won't just know the commands - you'll understand why they work, and you'll be able to think your way through problems you've never seen before.

Stop guessing and start understanding how Git works.

Enroll now and learn Git the right way

🐾 From FAUNers

faun.dev

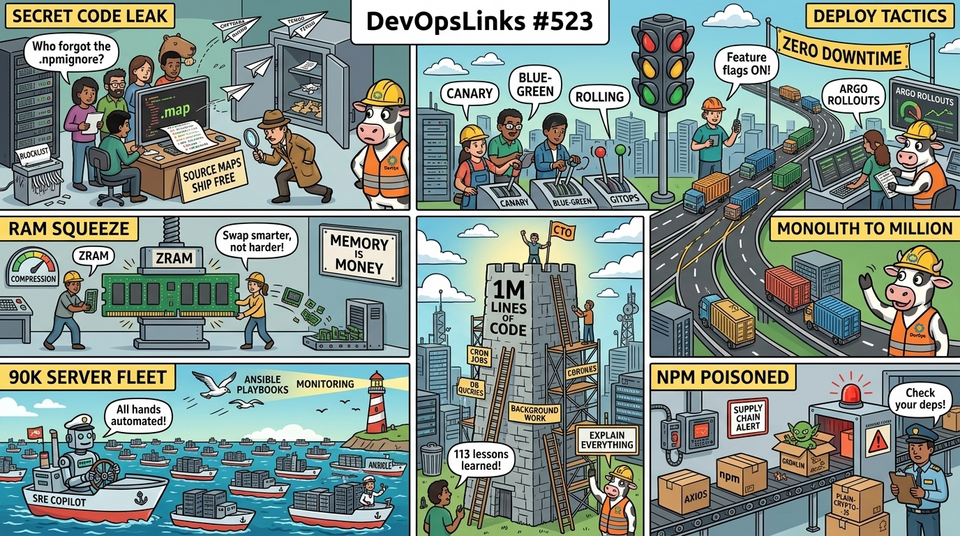

An npm source map exposed Claude Code's internal TypeScript: tool registry, system prompts, permission classifiers, pricing, feature flags, and employee tooling.

It revealed an always-on Undercover Mode that strips AI attributions, enables character-level AI tracking, and routes telemetry under codenames like Capybara and Tengu. The dump also shows compiled safety-bypass gates.

🔗 Stories, Tutorials & Articles

digitalocean.com

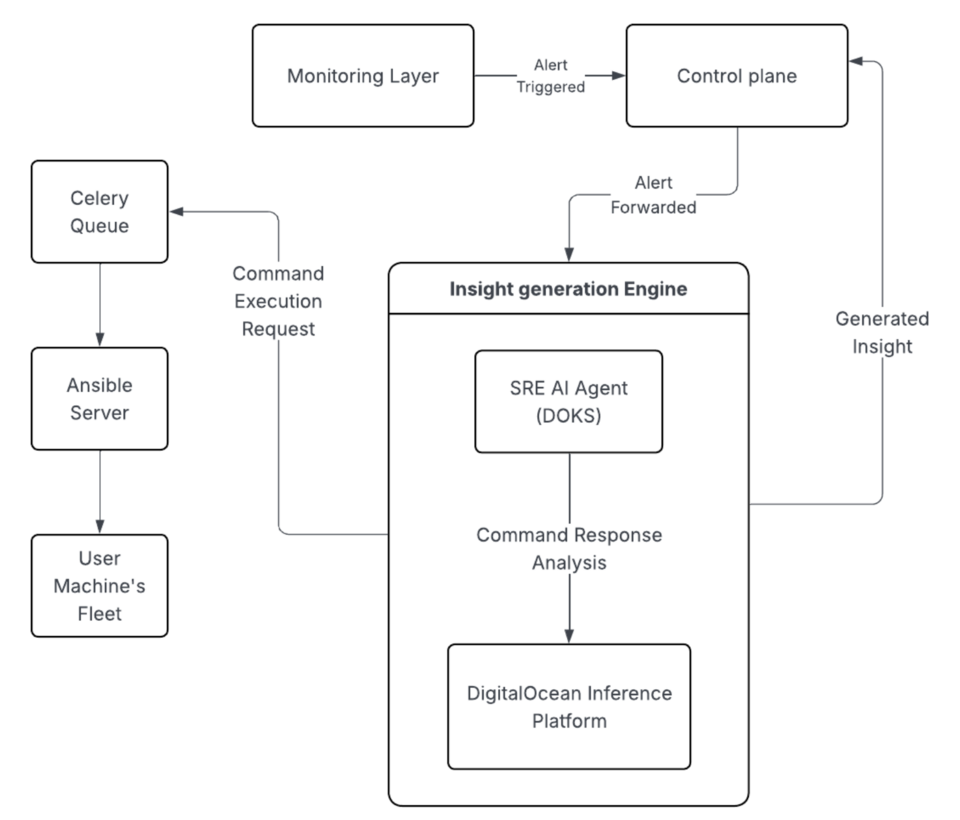

Cloudways scaled from a bootstrapped startup to a leading managed PHP hosting service, encountering challenges with growing support load. Early on, Cloudways recognized the opportunity to implement an AI-based SRE agent to reduce the burden on support teams and provide faster diagnosis and resolution for web applications. Cloudways Copilot, powered by DigitalOcean Kubernetes platform and Ansible Server, efficiently monitors and troubleshoots webstack issues on a fleet of 90K servers.

socket.dev

A supply chain attack on Axios introduced a malicious dependency, plain-crypto-js@4.2.1, published minutes earlier and absent from the project’s GitHub releases.

theregister.com

The Register contrasts zram and zswap. It flags a patch that claims up to 50% faster zram ops. It notes Fedora enables zram by default.

It details that zram provides compressed in‑RAM swap (LZ4). zswap compresses pages before writing to disk and requires on‑disk swap.

Distros and OSes ship memory compression by default. Swap shifts toward compressed in‑RAM tiers. Admins face revising swap layouts.

circleci.com

Deployment strategies control traffic shifts, rollback speed, and release risk. Options: canary, blue‑green, rolling, feature flags, shadow, immutable, and GitOps.

Strategies trade production risk for setup cost. They pair with Argo Rollouts, Kayenta, ArgoCD/Flux, service meshes, and flag platforms.

Pipelines must automate schema migrations, observability, and rollback.

Teams converge on progressive delivery - combining GitOps, automated canaries (Argo Rollouts/Kayenta), and feature flags. Outcome: Fewer surprises. Fewer 2 a.m. all‑hands.
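As a rough illustration of what an automated canary gate decides, here is a minimal sketch that compares the canary's error rate against the baseline and returns promote or rollback. The types, the function names, and the one-percentage-point tolerance are assumptions for the example; this is not the analysis that Argo Rollouts or Kayenta actually performs.

```typescript
// Hypothetical request counters collected for each version during the canary window.
type Stats = { requests: number; errors: number };
type Decision = "promote" | "rollback";

// Fraction of failed requests; treated as 0 when no traffic has been seen yet.
function errorRate(stats: Stats): number {
  return stats.requests === 0 ? 0 : stats.errors / stats.requests;
}

// Promote only if the canary is no worse than the baseline plus a tolerance.
function canaryDecision(baseline: Stats, canary: Stats, tolerance: number = 0.01): Decision {
  return errorRate(canary) <= errorRate(baseline) + tolerance ? "promote" : "rollback";
}

const healthy = canaryDecision({ requests: 10000, errors: 50 }, { requests: 500, errors: 3 });
const failing = canaryDecision({ requests: 10000, errors: 50 }, { requests: 500, errors: 40 });
```

Real tools compare many metrics with statistical tests, but the shape of the decision is the same: a measured comparison, then an automatic promote or rollback.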

semicolonandsons.com

The post discusses performance issues related to page counts, long cron-job reads, RAM pressure, and offloading work to background jobs. It also touches on common sources of front-end performance issues, the importance of running EXPLAIN on DB queries, and the benefits of cultivating a culture of openness in discussing performance with team members. Additionally, it emphasizes the need for monitoring layers in software testing and the importance of having multiple team members capable of fixing critical issues.

⚙️ Tools, Apps & Software

github.com

Collection of npm package manager Security Best Practices

🤔 Did you know?

Did you know that Google SRE introduced error budgets to end the tug-of-war between shipping features and improving reliability? As long as a service stays within its SLO, releases keep flowing, but once the budget is burned the team halts non-critical changes until reliability recovers. The budget is not just a dashboard metric - it feeds directly into release gating, postmortem action items, and quarterly planning, forming a closed control loop that makes SLIs and SLOs operational levers instead of vanity numbers.
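The arithmetic behind an error budget is short enough to sketch. The example below assumes an availability SLO measured in minutes over a fixed window; the function names and the sample numbers are illustrative and not taken from Google's material.

```typescript
// Minutes of allowed unavailability for a given SLO over a window of days.
function errorBudgetMinutes(sloPercent: number, windowDays: number): number {
  const totalMinutes = windowDays * 24 * 60;
  return totalMinutes * (1 - sloPercent / 100);
}

// Release gate: non-critical changes flow only while some budget remains.
function canRelease(budgetMinutes: number, downtimeMinutes: number): boolean {
  return downtimeMinutes < budgetMinutes;
}

// A 99.9% SLO over 30 days leaves roughly 43.2 minutes of budget.
const budget = errorBudgetMinutes(99.9, 30);

const afterSmallIncident = canRelease(budget, 12); // budget left, releases continue
const afterLongOutage = canRelease(budget, 60); // budget burned, releases halt
```

Wiring a check like canRelease into the deploy pipeline is what turns the SLO from a dashboard number into an actual gate.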

🤖 Once, SenseiOne Said

"The cloud sells you elasticity, then bills you for every place your system forgot how to say no. SRE is mostly teaching distributed software the difference between allowed and possible."

- SenseiOne

⚡Growth Notes

Most teams that adopt container image scanning in CI end up training developers to bump base image tags until the scanner goes green, without ever reading the CVE description or checking whether the vulnerable code path is actually reachable. The result is a pipeline that feels secure but produces a team that cannot triage a real exploit under pressure, because the muscle they built is suppression, not analysis.

😂 Meme of the week