| |

| 🔗 Stories, Tutorials & Articles |

| |

|

| |

| Introducing Claude Opus 4.8 |

| |

| |

| Claude Opus 4.8 outperforms prior models and GPT-5.5 across multiple benchmarks, with an emphasis on honest collaboration and improved judgment. It aims at enterprise use with better reliability and reasoning quality, and is available now at the same pricing as Opus 4.7. |

|

| |

|

| |

|

| |

| Building a Continuous Conversational Insights Pipeline in BigQuery |

| |

| |

| This deep dive describes a conversational analytics pipeline built on Google Cloud and BigQuery that handles multi-departmental data segmentation with a hybrid semantic filtering approach. By pre-segmenting data and running targeted models, the pipeline surfaces granular insights that are often lost in global noise, and it is designed to work at scale. |

|

| |

|

| |

|

| |

| Qwen3.7-Plus: Multimodal Agent Intelligence |

| |

| |

| Qwen3.7-Plus is a multimodal agent that combines GUI and CLI interactions and targets coding, tool use, and productivity workflows. It generalizes across diverse agent frameworks, with competitive text performance and strong reasoning on challenging STEM benchmarks. |

|

| |

|

|

| |

|

| |

| Top 7 Python Libraries for Large-Scale Data Processing |

| |

| |

| This article covers Python libraries that make large-scale data processing faster, more scalable, and easier to manage across modern data workflows. |

|

| |

|

| |

|

| |

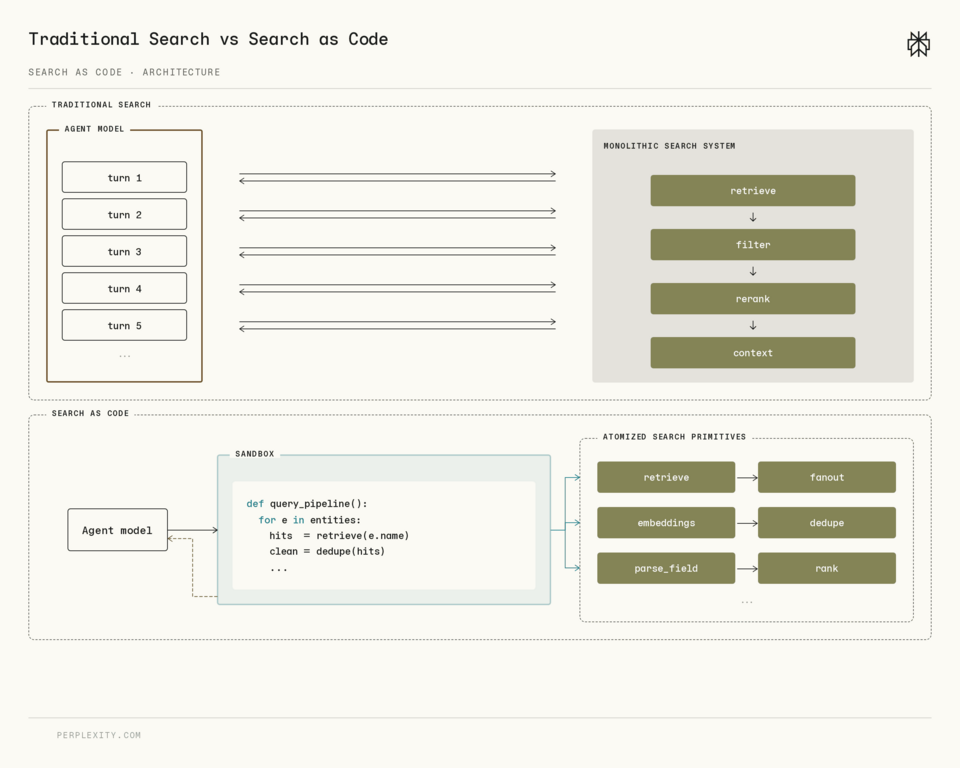

| Rethinking Search as Code Generation |

| |

| |

| Perplexity's engineers introduced Search as Code: developers use its Python SDK to call low-level retrieval primitives instead of sending queries to a single search endpoint. |

|

| |

|

|

| |

| 👉 Got something to share? Create your FAUN Page and start publishing your blog posts, tools, and updates. Grow your audience and get discovered by the developer community. |