🔗 Stories, Tutorials & Articles

Create Custom MCP Catalogs and Profiles

Docker made Custom Catalogs and Profiles available for MCP servers. Admins can distribute server catalogs they approve, and teams can package per-developer configurations as OCI artifacts.

How Claude Code works in large codebases: Best practices and where to start ✅

Anthropic breaks down the patterns behind successful Claude Code rollouts in monorepos, legacy systems, and codebases spanning thousands of developers. A central point is that Claude Code performs agentic search over the live filesystem instead of relying on a RAG index, which drifts out of sync as active engineering teams keep changing the code.

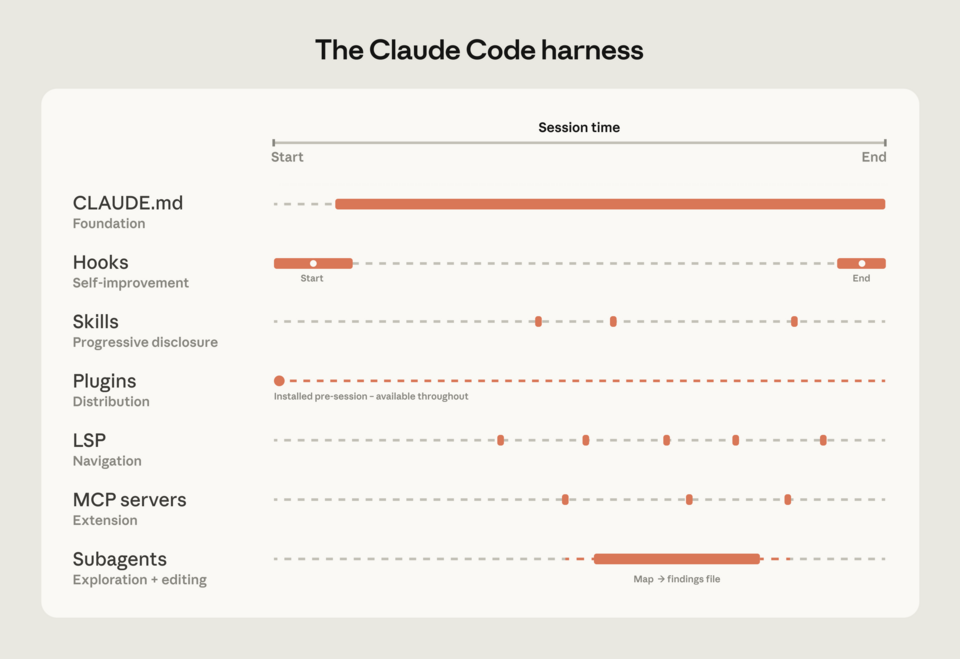

How you configure Claude matters more than the model itself. CLAUDE.md files, hooks, skills, plugins, MCP servers, LSP integrations, and subagents all load context in different ways. Teams that combine them with intent, instead of dumping everything into CLAUDE.md, navigate large codebases much better.

Claude’s next enterprise battle is not models: it’s the agent control plane

New data shows Microsoft and OpenAI leading agent orchestration, but Anthropic's rising stake signals a shift in who controls AI infrastructure. Anthropic's move from the model layer to the orchestration layer hints at a strategic battle over agent runtime platforms, where operational AI work happens.

AI Is Doing the Testing Now

Brijesh Deb's third "comfortable lie" of software testing is that AI is now doing the testing: coverage dashboards hit 80%+, regression suites maintain themselves, and leadership concludes that risk is handled, while the experienced testers who knew the domain quietly get redeployed or made redundant.

His core distinction is that AI predicts from what already exists (code, docs, historical tests) but cannot exercise testing judgement, which lives in the gap between what is documented and what real users actually do, including the undocumented business rules nobody thought to write down.

He illustrates this with a fintech case: AI-generated tests covered every documented requirement perfectly, yet a regional payment failure still surfaced from a sequencing rule held only as tacit knowledge by two ops people. His conclusion is that AI works well only as an amplifier of human judgement, not as a replacement for the person whose job is to ask whether the green dashboard is covering the right things.

Tokenomics: the 62.5-minute rule for Claude's cache ✅

Ryan Skidmore works out the tokenomics of Anthropic's prompt cache and lands on a single rule: if you expect to need a cached prefix again within 62.5 minutes, keep refreshing it with cheap reads; past that, let it expire and rewrite. A 5-minute cache write costs 1.25x base input and a read costs 0.10x, so one write costs the same as 12.5 refresh reads, and the break-even window works out to 5 * (1.25 / 0.10) = 62.5 minutes regardless of model or prefix size.
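A minimal sketch of that arithmetic, for readers who want to plug in their own numbers. The function names are hypothetical, the multipliers are the ones quoted above, and the cost model is the simplified one from the summary (one refresh read per TTL window versus one fresh write), not an official pricing calculator.

```python
def refresh_break_even_minutes(ttl_minutes: float = 5.0,
                               write_multiplier: float = 1.25,
                               read_multiplier: float = 0.10) -> float:
    """Idle time at which keeping a prefix warm costs as much as rewriting it.

    Each refresh read keeps the cache alive for one more TTL window, so the
    break-even is the TTL times the number of reads one write would pay for.
    """
    reads_per_write = write_multiplier / read_multiplier
    return ttl_minutes * reads_per_write


def cheaper_strategy(expected_gap_minutes: float) -> str:
    """Return 'refresh' if keeping the cache warm is cheaper, else 'rewrite'."""
    if expected_gap_minutes < refresh_break_even_minutes():
        return "refresh"
    return "rewrite"


if __name__ == "__main__":
    print(f"break-even: {refresh_break_even_minutes():.1f} minutes")
    for gap in (20, 60, 90):
        print(f"{gap} min until next use -> {cheaper_strategy(gap)}")
```

Because both multipliers scale with the same base input price and the same prefix length, those terms cancel, which is why the rule does not depend on the model or the prefix size.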

He flags three cache footguns that silently torch the math. Opus 4.7 uses a new tokenizer that can balloon the same text by 35%. Prefixes under the model's minimum (4,096 tokens for Opus, 1,024 for Sonnet) don't cache at all and fail silently. And the cache breakpoint only looks back 20 content blocks, so long agent transcripts need an earlier breakpoint to keep hitting the cache.
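Two of those footguns can be caught with a cheap check before a request goes out. The sketch below is a hypothetical helper, not part of any SDK: `cache_preflight`, its parameters, and the family names are illustrative, and the thresholds are simply the figures quoted above, so verify them against current documentation before relying on them.

```python
# Figures quoted in the summary above; treat them as assumptions.
MIN_CACHEABLE_TOKENS = {"opus": 4096, "sonnet": 1024}
LOOKBACK_BLOCKS = 20


def cache_preflight(model_family: str,
                    prefix_tokens: int,
                    blocks_since_cached_prefix: int) -> list[str]:
    """Return warnings for conditions that would silently miss the cache."""
    warnings = []

    minimum = MIN_CACHEABLE_TOKENS.get(model_family)
    if minimum is None:
        warnings.append(f"unknown model family {model_family!r}: minimum not known")
    elif prefix_tokens < minimum:
        warnings.append(
            f"prefix is {prefix_tokens} tokens, below the {minimum}-token "
            f"minimum for {model_family}: it will not be cached"
        )

    if blocks_since_cached_prefix > LOOKBACK_BLOCKS:
        warnings.append(
            f"{blocks_since_cached_prefix} content blocks since the cached "
            f"prefix exceeds the {LOOKBACK_BLOCKS}-block lookback: "
            "add an earlier breakpoint"
        )
    return warnings


if __name__ == "__main__":
    for warning in cache_preflight("opus", 2000, 25):
        print("warning:", warning)
```

The token count should come from the tokenizer of the model actually being called, since the same text can tokenize to different lengths across models.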

He extends the same model-independent math to /compact, where break-even depends only on the compression ratio: roughly 10:1 pays back in 8 turns, 5:1 in 17, and anything closer to 2:1 is just an expensive summary that also risks dropping the error message your agent will have to rediscover.

👉 Got something to share? Create your FAUN Page and start publishing your blog posts, tools, and updates. Grow your audience, and get discovered by the developer community.
